Computing Resource

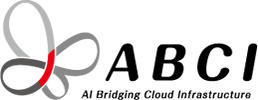

ABCI consists of 766 Compute Nodes (H) with a total of 6,128 NVIDIA H200 GPU accelerators, a shared file system and object storage that together provide 75PB of capacity, a high-speed InfiniBand network connecting the compute nodes and the storage systems, firewall equipment, and other components.

For information on the computing resources of ABCI 2.0, please see Computing Resource (ABCI 2.0).

ABCI System Outline

Features

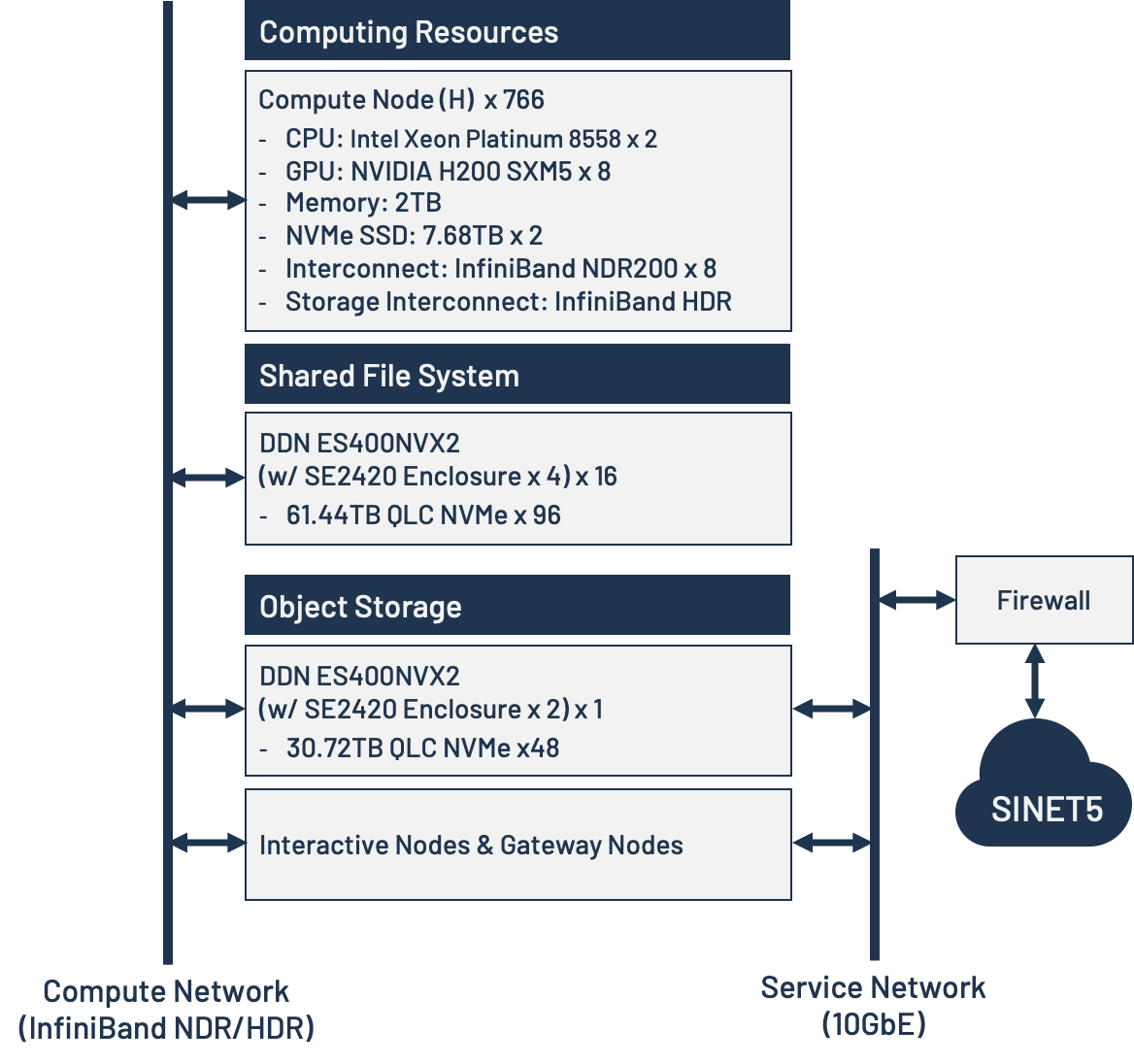

Compute Node (H)

- Each Compute Node (H) has eight NVIDIA H200 GPU accelerators, two 5th-Generation Intel Xeon Scalable Processors (codenamed Emerald Rapids), two NVMe SSDs, eight InfiniBand NDR200 ports (200Gbps each), and one InfiniBand HDR port for storage access.

- The theoretical performance of Compute Node (H) is 8,122 AI-TFLOPS for half precision (required for AI machine learning) and 542 TFLOPS for double precision (required for scientific and technical computations).

- The total theoretical performance of the 766 Compute Nodes (H) is 6.2 AI-EFLOPS (half precision) and 415 PFLOPS (double precision).
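
The system-wide totals follow from the per-node figures and the node count. The sketch below recomputes them as a sanity check; it uses only numbers quoted in this section, and the function name `system_totals` is illustrative and not part of any ABCI tool.

```python
# Figures quoted in this section.
NODES = 766
GPUS_PER_NODE = 8
HALF_TFLOPS_PER_NODE = 8_122    # AI-TFLOPS, half precision
DOUBLE_TFLOPS_PER_NODE = 542    # TFLOPS, double precision


def system_totals(nodes: int = NODES) -> dict:
    """Scale the per-node figures to the whole system (illustrative helper)."""
    return {
        "gpus": nodes * GPUS_PER_NODE,
        # 1 EFLOPS = 1,000,000 TFLOPS
        "half_eflops": nodes * HALF_TFLOPS_PER_NODE / 1e6,
        # 1 PFLOPS = 1,000 TFLOPS
        "double_pflops": nodes * DOUBLE_TFLOPS_PER_NODE / 1e3,
    }


if __name__ == "__main__":
    for name, value in system_totals().items():
        print(f"{name}: {value}")
```

With the values above, the products come to 6,128 GPUs, about 6.22 AI-EFLOPS, and about 415 PFLOPS.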

HPE Cray XD670 (1 server in 5U)

| Item | Specification |
|---|---|
| CPU | Intel Xeon Platinum 8558 Processor (260 MB Cache, 2.1 GHz, 48 Cores, 96 Threads) ×2 |
| GPU | NVIDIA H200 SXM5 141GB HBM3e ×8 |
| Memory | 2TB DDR5-4800/5600 |
| Local Storage | 7.68TB NVMe SSD ×2 |
| Interconnect | InfiniBand NDR200 (200Gbps) ×8 |
| Storage Interconnect | InfiniBand HDR (200Gbps) ×1 |
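
The per-component figures in the table can be combined into per-node aggregates, which are often what matters when sizing a job. The following is a minimal sketch; the class `ComputeNodeH` and its method names are hypothetical, and the bandwidth is the nominal line rate, not a measured throughput.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComputeNodeH:
    """Per-node figures taken from the specification table (hypothetical class)."""
    gpus: int = 8
    gpu_memory_gb: int = 141      # HBM3e per GPU
    nvme_drives: int = 2
    nvme_tb: float = 7.68         # capacity per NVMe SSD
    ndr200_ports: int = 8
    port_gbps: int = 200          # nominal rate per NDR200 port

    def total_gpu_memory_gb(self) -> int:
        """Aggregate GPU memory across all GPUs in the node."""
        return self.gpus * self.gpu_memory_gb

    def total_local_storage_tb(self) -> float:
        """Aggregate raw capacity of the local NVMe SSDs."""
        return self.nvme_drives * self.nvme_tb

    def injection_gbps(self) -> int:
        """Nominal aggregate bandwidth of the compute interconnect ports."""
        return self.ndr200_ports * self.port_gbps


if __name__ == "__main__":
    node = ComputeNodeH()
    print(node.total_gpu_memory_gb(), "GB GPU memory")
    print(node.total_local_storage_tb(), "TB local NVMe")
    print(node.injection_gbps(), "Gbps interconnect")
```

Under these figures a node offers 1,128GB of GPU memory, 15.36TB of local NVMe storage, and 1,600Gbps of nominal interconnect bandwidth.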

Storage Systems

The ABCI system has five storage systems for storing the large amounts of data used by AI and Big Data applications. Together they provide the shared file system and the object storage. The shared file system is a fast distributed file system built on Lustre, with an effective capacity of approximately 73PB. The object storage offers an Amazon Simple Storage Service (Amazon S3) compatible interface and has 1PB of physical capacity available.

High-Speed Interconnect

Compute Nodes (H), the shared file system, and the object storage are interconnected by a high-speed InfiniBand network. Compute Nodes (H) can communicate with each other at full bisection bandwidth.
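
Full bisection bandwidth means that when the nodes are split into two equal halves, every node in one half can send to the other half at its full port rate. A rough upper bound can be sketched as follows. This is an idealized estimate that assumes the nominal line rate of eight 200Gbps ports per node, ignores protocol overhead, and makes no claim about the actual fabric topology, which this section does not describe. The function name `ideal_bisection_tbps` is hypothetical.

```python
def ideal_bisection_tbps(nodes: int, ports_per_node: int, port_gbps: int) -> float:
    """Idealized one-way bandwidth across an even split of the nodes, in Tbps.

    Assumes a non-blocking fabric in which each node in one half
    transmits at the nominal line rate on all of its ports.
    """
    half = nodes // 2                      # nodes on one side of the cut
    return half * ports_per_node * port_gbps / 1000


if __name__ == "__main__":
    # 766 nodes, 8 NDR200 ports per node, 200 Gbps per port
    print(ideal_bisection_tbps(766, 8, 200), "Tbps")
```

For 766 nodes this gives 383 × 8 × 200Gbps, or about 612.8Tbps in one direction.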

Interconnection Network

ABCI is connected to SINET6 (400Gbps), so users can access ABCI over the Internet. The connection is protected by firewalls and uses two-stage authentication.